Image segmentation with StarDist

StarDist is a deep-learning-based method for instance segmentation of star-convex objects such as cell nuclei.
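StarDist describes every object as a star-convex polygon: a shape containing a center point from which every point of the shape can be reached along a straight line that stays inside the shape. As we understand the method, the network predicts for each pixel an object probability plus the distances to the object boundary along a fixed number of equally spaced rays. The minimal sketch below only illustrates that geometry by turning ray distances into polygon vertices and rasterizing the polygon. `rays_to_polygon` is a hypothetical helper, and its angle convention is our assumption, not StarDist's API.

```python
import numpy as np
from skimage.draw import polygon

def rays_to_polygon(center, distances):
    """Hypothetical helper (not part of StarDist): convert radial distances
    into polygon vertices.

    center    -- (row, col) of the polygon's center pixel
    distances -- distance to the boundary along each ray; ray k is assumed
                 to point at angle 2*pi*k/n
    Returns an (n, 2) array of (row, col) vertex coordinates.
    """
    distances = np.asarray(distances, dtype=float)
    n_rays = len(distances)
    angles = 2 * np.pi * np.arange(n_rays) / n_rays
    rows = center[0] + distances * np.sin(angles)
    cols = center[1] + distances * np.cos(angles)
    return np.stack([rows, cols], axis=1)

# A roundish object described by 8 rays of equal length 5
vertices = rays_to_polygon(center=(10, 10), distances=[5] * 8)

# Rasterize the polygon into a small label image with label 1
mask = np.zeros((21, 21), dtype=np.uint16)
rr, cc = polygon(vertices[:, 0], vertices[:, 1], shape=mask.shape)
mask[rr, cc] = 1
print(np.round(vertices, 2))
```

Because every ray has its own length, the same representation covers round as well as elongated or irregular (but star-convex) nuclei.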


As usual, we start by loading an example image.

```python
from stardist.models import StarDist2D
from csbdeep.utils import normalize
from stardist import random_label_cmap
import stackview
import matplotlib.pyplot as plt
import numpy as np
from skimage.data import human_mitosis

# load an example image shipped with scikit-image
image = human_mitosis()
stackview.insight(image)
```


Loading a model

A model is a file (or a set of files) that contains a trained neural network capable of segmenting an image. StarDist comes with several pretrained models that demonstrate how the algorithm performs on general use cases such as nuclei segmentation. If a pretrained model does not perform well on your data (be a good scientist and check that carefully!), you need to train your own model on data similar to yours. Training typically requires a powerful GPU, whereas applying a model to a 2D image (the prediction step) works without one.

```python
# load a pretrained model; it is downloaded automatically on first use
model = StarDist2D.from_pretrained('2D_versatile_fluo')
```

```
Found model '2D_versatile_fluo' for 'StarDist2D'.
Loading network weights from 'weights_best.h5'.
Loading thresholds from 'thresholds.json'.
Using default values: prob_thresh=0.479071, nms_thresh=0.3.
```

Normalizing the input image

Many neural-network-based algorithms expect normalized input data. A very common approach is percentile normalization: determine the 1st and the 99.8th percentiles of the image intensities and rescale the image so that these two values map to 0 and 1, respectively. This is necessary because the model was trained on images normalized to this range and may fail to segment images with different intensity ranges.

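```python
# compute the percentiles jointly over both image axes (Y, X)
axis_norm = (0, 1)
# map the 1st percentile to 0 and the 99.8th percentile to 1
image = normalize(image, 1, 99.8, axis=axis_norm)
```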
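The following NumPy-only sketch shows what percentile normalization does. It assumes, based on our reading of csbdeep, that normalize computes (x - p_low) / (p_high - p_low + eps) and does not clip by default, so a few pixels end up slightly below 0 or above 1. percentile_normalize is a hypothetical name; unlike csbdeep's function, it always computes the percentiles over the whole array instead of accepting an axis argument.

```python
import numpy as np

def percentile_normalize(image, pmin=1.0, pmax=99.8, eps=1e-20):
    """Hypothetical helper: map the pmin-th percentile to 0 and the
    pmax-th percentile to 1. Values outside that range are kept
    (slightly below 0 or above 1), i.e. nothing is clipped."""
    image = np.asarray(image, dtype=np.float32)
    low, high = np.percentile(image, [pmin, pmax])
    return (image - low) / (high - low + eps)

# A synthetic 8-bit intensity ramp standing in for a microscopy image
ramp = np.linspace(0, 255, 10_000).reshape(100, 100)
normalized = percentile_normalize(ramp)
print(normalized.min(), normalized.max())
```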

Segmenting an image and giving each individual object its own label is called “instance segmentation”; applying a trained model to new data is called “prediction” (or “inference”) in the machine-learning community.

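```python
# predict one label per nucleus; `details` holds per-object information such as the predicted polygons
labels, details = model.predict_instances(image)
stackview.insight(labels)
```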

```
1/1 [==============================] - 0s 127ms/step
```


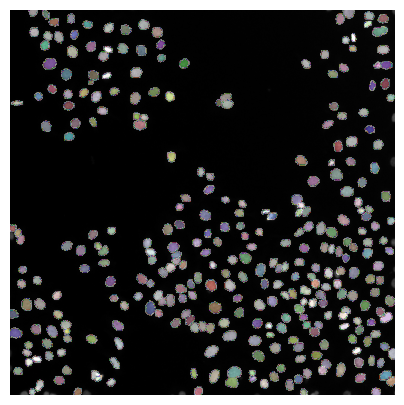
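To illustrate what a label image encodes, compared with a binary (semantic) segmentation, the sketch below labels a small synthetic binary mask with scikit-image's connected-component labeling. This is not how StarDist works internally. It shows why plain connected-component labeling cannot separate touching objects, a situation that StarDist's per-object polygons are designed to handle better.

```python
import numpy as np
from skimage.measure import label

# Synthetic binary (semantic) segmentation: True = nucleus, False = background
binary_mask = np.zeros((10, 20), dtype=bool)
binary_mask[1:4, 1:4] = True     # blob A
binary_mask[6:9, 1:4] = True     # blob B
binary_mask[2:8, 8:12] = True    # blob C ...
binary_mask[2:8, 12:16] = True   # ... which touches blob D

# Connected-component labeling: every connected region gets its own integer,
# background stays 0
instances = label(binary_mask)
print(instances.max())  # 3 objects, because the touching blobs C and D merge
```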

Result visualization

Cell and nucleus segmentation results are easiest to check when the label image is overlaid on the original image:

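```python
plt.figure(figsize=(5, 5))
# normalized image in grayscale ...
plt.imshow(image, clim=(0, 1), cmap='gray')
# ... with the label image on top, half transparent, one random color per object
plt.imshow(labels, cmap=random_label_cmap(), alpha=0.5)
plt.axis('off');
```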

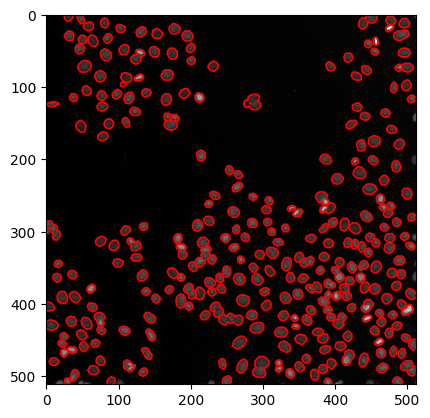
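In an overlay like the one above, the background (label 0) is drawn as well; random_label_cmap, in the versions we know, assigns black to label 0, which dims the whole background. One possible refinement, sketched below, masks out label 0 first: matplotlib by default leaves masked pixels transparent, so only the segmented objects are tinted. The synthetic demo_image and demo_labels are placeholders for image and labels from above, and the "tab20" colormap is an arbitrary choice.

```python
import matplotlib
matplotlib.use("Agg")  # off-screen rendering for scripts; omit inside Jupyter
import matplotlib.pyplot as plt
import numpy as np

# Placeholder data standing in for the normalized `image` and `labels` above
rng = np.random.default_rng(0)
demo_image = rng.random((64, 64))
demo_labels = np.zeros((64, 64), dtype=np.int32)
demo_labels[10:25, 10:25] = 1
demo_labels[35:55, 30:50] = 2

# Mask the background (label 0); masked pixels are transparent by default
overlay = np.ma.masked_equal(demo_labels, 0)

fig, ax = plt.subplots(figsize=(5, 5))
ax.imshow(demo_image, clim=(0, 1), cmap="gray")
ax.imshow(overlay, cmap="tab20", alpha=0.5, interpolation="nearest")
ax.axis("off")
```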

… or by drawing outlines around segmented regions.

```python
# create a new figure with a single set of axes
fig, axes = plt.subplots(1, 1)
# show the image in grayscale
axes.imshow(image, cmap=plt.cm.gray)
# draw red outlines where the label image changes from background (0) to an object
axes.contour(labels, [0.5], linewidths=1.2, colors='r');
```
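A single contour at level 0.5 only traces the transition between background (0) and any object (1 or higher). Where two nuclei touch, the label values change from, say, 1 to 2 without crossing 0.5, so no outline is drawn between them. scikit-image's find_boundaries marks every pixel where the label changes, including between touching objects. The sketch below uses a small synthetic label image; in the notebook you would pass labels instead.

```python
import numpy as np
from skimage.segmentation import find_boundaries

# Two touching objects (labels 1 and 2) on background 0
demo_labels = np.zeros((10, 10), dtype=np.int32)
demo_labels[2:8, 2:5] = 1
demo_labels[2:8, 5:8] = 2

# mode="inner" keeps only boundary pixels that lie inside the objects
boundaries = find_boundaries(demo_labels, mode="inner")

# The interface between the two touching objects is marked ...
print(boundaries[4, 4], boundaries[4, 5])
# ... while the interior of an object is not
print(boundaries[4, 3])
```

The resulting boolean mask can be displayed on top of the image like any other array; alternatively, skimage.segmentation.mark_boundaries(image, labels) returns an RGB image with the boundaries already drawn in.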

Note: The model applied here was not trained on the human_mitosis image. Nevertheless, the result looks reasonable at first glance.
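A visual impression is only a first check. A more careful one compares the prediction with a manual annotation of the same image. The sketch below computes the Jaccard index (intersection over union) of the foreground masks of two label images; the arrays prediction and annotation are hypothetical placeholders, and foreground_iou is our own helper name. It only measures pixel overlap and ignores whether individual nuclei were split or merged; for object-level evaluation, StarDist provides its own matching utilities (to our knowledge in the stardist.matching module).

```python
import numpy as np

def foreground_iou(labels_a, labels_b):
    """Hypothetical helper: Jaccard index (intersection over union) of the
    foreground (label != 0) of two label images of equal shape."""
    fg_a = np.asarray(labels_a) != 0
    fg_b = np.asarray(labels_b) != 0
    union = np.logical_or(fg_a, fg_b).sum()
    if union == 0:
        return 1.0  # both empty: treated as perfect agreement (a convention)
    return np.logical_and(fg_a, fg_b).sum() / union

# Hypothetical example: prediction and annotation overlap in one of three rows
prediction = np.zeros((4, 4), dtype=int)
prediction[0:2, 0:4] = 1        # 8 foreground pixels
annotation = np.zeros((4, 4), dtype=int)
annotation[1:3, 0:4] = 5        # 8 foreground pixels; label ids may differ
print(foreground_iou(prediction, annotation))
```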

More available pretrained models

StarDist offers further pretrained models. Calling from_pretrained() without arguments lists them:

```python
StarDist2D.from_pretrained()
```

```
There are 4 registered models for 'StarDist2D':

Name                  Alias(es)
────                  ─────────
'2D_versatile_fluo'   'Versatile (fluorescent nuclei)'
'2D_versatile_he'     'Versatile (H&E nuclei)'
'2D_paper_dsb2018'    'DSB 2018 (from StarDist 2D paper)'
'2D_demo'             None
```

Exercise

Load ../../data/blobs.tif and apply StarDist to it.